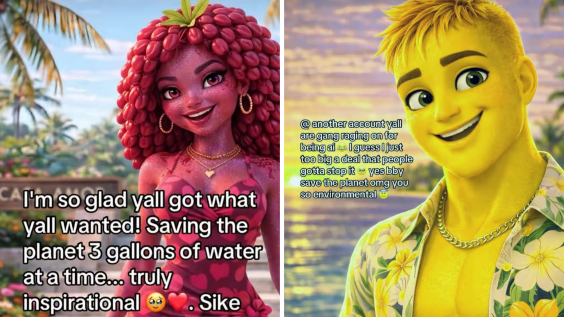

The creator behind Fruit Love Island—an AI-generated series about anthropomorphic fruit navigating romantic drama—rage-quit TikTok this week after the platform removed dozens of their videos. The account, which had amassed millions of views by flooding the platform with AI-generated episodes, posted a final message declaring "BULLYING WORKS" before disappearing entirely. What looked like a scalable content empire turned out to be a house of cards the moment TikTok's moderation team showed up.

The collapse was swift and total. One day, the creator was riding algorithmic momentum, pumping out multiple episodes per day of AI-animated fruit experiencing infidelity, betrayal, and reconciliation. The next, the account was gone. No pivot to YouTube. No defiant relaunch on Instagram. Just a deletion and a bitter exit note blaming "bullies" for the takedowns. It's the clearest evidence yet that AI content farms have no Plan B—because the entire business model depends on platforms looking the other way.

Fruit Love Island wasn't an outlier. It was part of a growing ecosystem of AI-generated serialized content designed to exploit TikTok's recommendation algorithm without requiring traditional production infrastructure. These accounts operate on volume: generate dozens of videos per week, let the algorithm surface the ones that hit, and iterate based on engagement metrics. The content itself is secondary to the distribution system, which is why the model collapses the moment that distribution system imposes any friction at all.

TikTok's takedowns weren't even a comprehensive crackdown—just a targeted removal of videos that likely violated community guidelines around spam or inauthentic content. But that was enough. The creator didn't attempt to appeal, didn't argue fair use, didn't rebuild with a new strategy. They just left. Because when your content strategy is "generate faster than moderators can remove," losing that race even once means the game is over.

The "BULLYING WORKS" framing is telling. The creator positioned mass criticism and platform enforcement as equivalent forces—both external threats to their operation rather than consequences of producing low-quality, algorithmically optimized spam. It's the same rhetorical move AI-generated fruit infidelity dramas themselves rely on: emotional intensity as a substitute for substance. The content farms that produce this material understand engagement mechanics but not editorial judgment. They know how to trigger the algorithm but not how to build an audience that will follow them across platforms or defend them when moderation arrives.

This is the structural weakness at the center of AI slop's business model. Traditional creators build audience loyalty, diversify platforms, and develop IP that can survive a single account suspension. AI content farms do none of that. They're entirely dependent on algorithmic distribution within a single platform, which means they're one moderation wave away from total collapse. The Fruit Love Island creator had millions of views but zero leverage—because those views were never attached to a brand, a voice, or a creative vision anyone would follow elsewhere.

The speed of the collapse also highlights how little infrastructure these operations actually have. A traditional production company facing takedowns would consult lawyers, file appeals, adjust content strategy, or move to a different platform. The Fruit Love Island creator just quit. Because there was nothing to salvage. The account wasn't a business—it was a loophole. And once the loophole closed, there was no underlying operation worth preserving.

What's most revealing is that this happened on TikTok, a platform famously permissive about AI-generated content and algorithmic gaming. If Fruit Love Island couldn't survive TikTok's relatively light-touch moderation, it's hard to imagine AI slop thriving anywhere with stricter standards. YouTube's copyright system would shred these accounts. Instagram's engagement algorithms reward established creators. Even Twitter, for all its chaos, has Community Notes and quote-tweet ratios that expose low-effort content. TikTok was the best possible environment for AI content farms, and it still wasn't sustainable.

The Fruit Love Island implosion is what happens when platforms stop treating AI-generated spam as a neutral content category and start enforcing quality thresholds. It won't be the last. As AI tools chase enterprise revenue instead of consumer creativity, the gap between what AI can generate and what platforms will distribute is only going to widen. The content farms that built their operations on algorithmic permissiveness are about to discover that "bullying"—or as everyone else calls it, moderation—works better than they thought.